Facial Emotion Recognition (Assistive Tech)

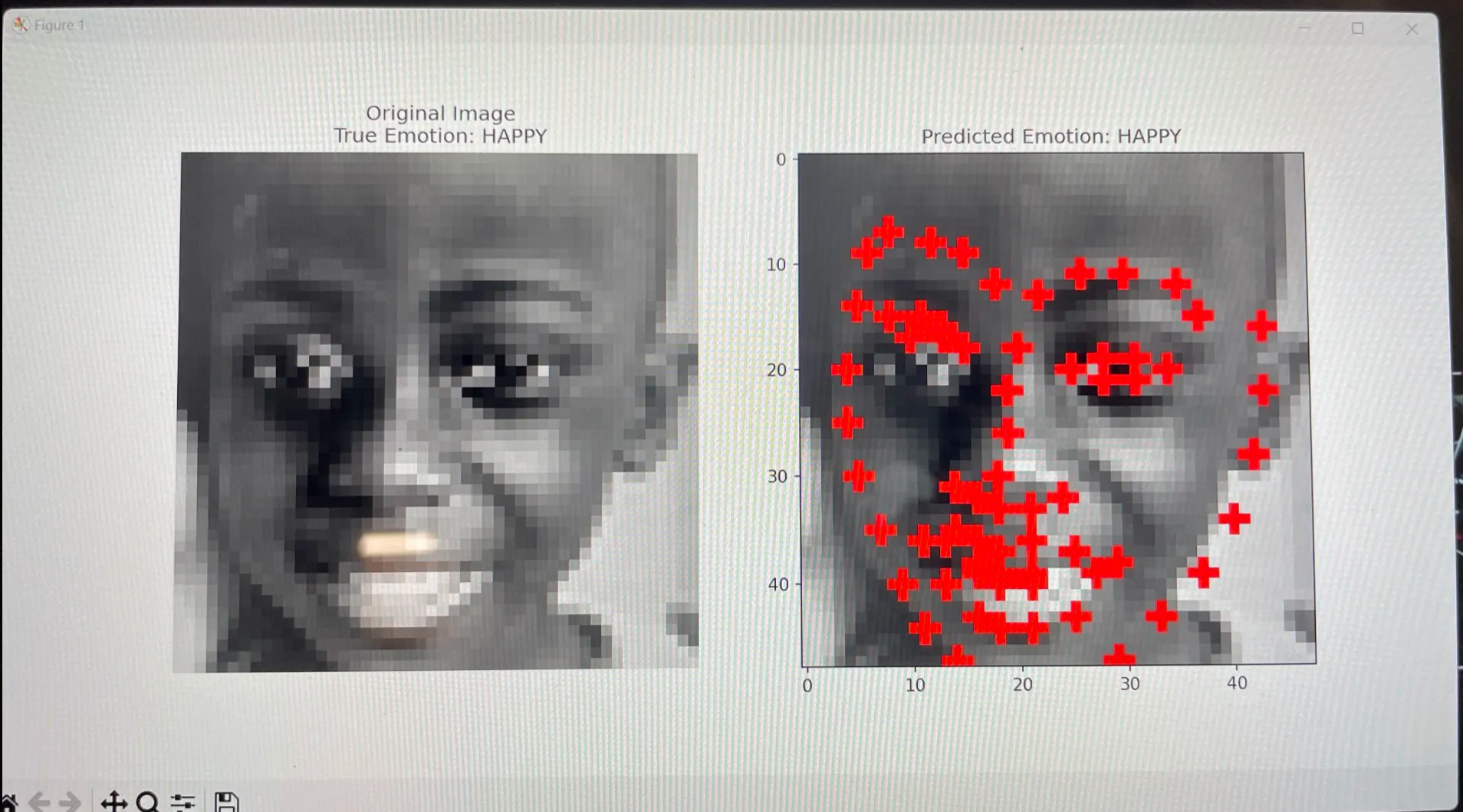

Compared three approaches—CNN on pixels, MLP on pixels, and MLP on landmark-distance features— to classify 5 emotions on 48×48 face crops. Built to understand accuracy vs robustness tradeoffs for real-time, accessible use cases.

TensorFlow/KerasCNNsFeature EngineeringEvaluationAssistive Tech

Timeline: Spring 2023 – Spring 2024 • Presented at Synopsys Science Fair (Spring 2024)

Task

5-class emotion

ANGRY/HAPPY/SAD/SURPRISE/NEUTRAL

Inputs

Pixels + Landmarks

48×48 pixels + landmark-derived features

Models

CNN + 2×MLP

Comparison across pipelines

Interactive case study

Model

CNN on pixels

Best at capturing texture cues; typically strongest accuracy with enough data.

Input

FER-style grayscale faces (48×48)

ANGRY • HAPPY • SAD • SURPRISE • NEUTRAL

Reported performance

~70% validation

From project writeup / prior runs

Architecture (high-level)

This is a visual explanation of the pipeline (not a live inference demo yet).

Tradeoff knob

Slide toward speed or toward accuracy/robustness — updates the recommendation below.

Speed

Robustness

Balanced: CNN is strong, but landmarks can help if domain shift is expected.

What worked well

- Learns spatial features automatically (edges → patterns)

- Good performance when data is consistent

- Works well for end-to-end pipelines

Limitations / gotchas

- More sensitive to lighting/background/domain shift

- Heavier compute than a small MLP

Image

Confusion matrix

Approach

- Baseline: MLP on flattened pixels to set a simple performance floor.

- CNN: learn spatial features directly from 48×48 images (stronger representation).

- Landmarks: feature-engineered distances to reduce sensitivity to background/lighting changes.

- Evaluation mindset: focus on confusion patterns (e.g., angry vs sad), not just overall accuracy.

Results

- Reached 70% validation accuracy on consolidated testing set.

- Received feedback from industry professionals at Synopsys Science Fair.

- Integrated camera model used to assist autistic children with their daily interactions.